RAG Bottleneck 1: Parsing

This article explores why document parsing is the first true bottleneck in most RAG systems. It breaks down how poor parsing corrupts the entire retrieval pipeline, from chunking to embedding to generation, and compares three main parsing approaches: text-based, layout-aware, and Vision Language Models.

Published on March 22, 2026

The hidden power of parsing in RAG systems

At UBIK, we've spent the past few years building AI systems, and there's something the RAG conversation keeps getting wrong. Everyone obsesses over embeddings, vector databases, and the latest models, but after digging into real performance bottlenecks, we've found that most people are missing something more fundamental. Most RAG implementations fail before they even get to retrieval. They break at parsing, the process of extracting structured meaning from documents. And because RAG is a sequential pipeline, broken parsing poisons everything downstream.

The problem isn't just pulling text from a file. It's preserving the structure, context, and relationships that make information meaningful, both for understanding and for search. A complex PDF with tables, multi-column layouts, and embedded images can't simply be dumped into a vector database. Yet companies spend months fine-tuning embeddings and optimizing vector search, only to discover their parsing was broken from day one: tables mangled into incoherent streams, section relationships lost, critical context scattered across disconnected chunks.

The consequences cascade fast. Retrieval can't surface the right information. Incomplete context triggers hallucinations. Your expensive language model ends up confidently explaining things that aren't true, not because of the model, but because of bad input that barely resembles the original document.

In this article, we'll dig into why parsing has become the primary bottleneck in RAG systems, and how getting it right can turn a frustrating experiment into something that actually works at scale.

Decoding parsing: The backbone of RAG systems

So what does parsing actually mean? Document parsing is the process of converting complex, unstructured documents into clean, structured representations that preserve not just content, but context and relationships. When you read a research paper, you instinctively understand that a title differs from an abstract, that a footnote relates to specific content above it, and that a table is a table. Parsing needs to recreate that structural understanding for machines.

Most systems don't. Instead of preserving structure, they shred it. Take a technical manual with structured procedures, cross-referenced sections, and formatted tables. A naive parser turns it into disconnected text chunks: a critical safety table becomes something like "Warning 150 Maximum Temperature Celsius Critical Shutdown", cross-references vanish, and diagram annotations scatter randomly. The document's meaning is gone before the AI ever sees it. PDFs make this especially brutal. They're fundamentally hostile to RAG systems; their internal structure rarely maps to logical reading order.

What makes bad parsing so damaging is how deep it reaches into the pipeline. Chunking depends on the parser distinguishing headers from body text; get this wrong, and your chunks are meaningless fragments. Embedding generation needs coherent, contextually meaningful text; feed it garbled output, and your vectors encode noise. Retrieval depends on semantically related content being grouped together, but if parsing scattered it across random chunks, similarity search can't save you.
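To make the safety-table example above concrete, here is a minimal sketch of the difference between flattening a table and serializing it with its structure intact. The table contents and the function names (`flatten`, `to_markdown`, `row_facts`) are invented for illustration and are not taken from any particular parser.

```python
# Hypothetical safety table; the values are invented for illustration.
header = ["Level", "Maximum Temperature (Celsius)", "Action"]
rows = [
    ["Warning", "150", "Reduce load"],
    ["Critical", "180", "Shutdown"],
]

def flatten(header, rows):
    """Naive extraction: every cell joined into one stream, structure lost."""
    cells = header + [cell for row in rows for cell in row]
    return " ".join(cells)

def to_markdown(header, rows):
    """Structure-preserving serialization: one line per row, columns kept."""
    lines = [
        "| " + " | ".join(header) + " |",
        "| " + " | ".join("---" for _ in header) + " |",
    ]
    lines += ["| " + " | ".join(row) + " |" for row in rows]
    return "\n".join(lines)

def row_facts(header, rows):
    """One self-describing sentence per row, so each row can stand alone in a chunk."""
    return ["; ".join(f"{h}: {c}" for h, c in zip(header, row)) for row in rows]
```

In the flattened string, nothing says whether 150 belongs to "Warning" or "Critical". The markdown and per-row forms keep that association, which is what chunking and embedding need downstream.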

Exploring parsing methods

So what are the options? Parsing approaches vary widely in how they handle document complexity, and the choice sets a ceiling on the quality of everything downstream.

Text-based parsing

Text-based parsing is the most common starting point. Rather than using OCR, it works directly from the document's raw bytes, extracting whatever text is encoded in the file itself. It's fast and cheap, and on clean, well-structured documents it works fine. The problem is that most real-world documents aren't clean. Layout information (tables, columns, reading order, spatial relationships) lives outside the byte-encoded text, and text-based parsers discard all of it. You get content, but stripped of the structure that gives it meaning.

Layout-aware parsing

Layout-aware parsing takes a more sophisticated approach, combining OCR with vision models in a multistage pipeline. The first stage uses vision models to detect and classify document regions, distinguishing headers from body text, identifying table boundaries, and locating figures. OCR then extracts text within each detected region, and a final stage reassembles everything into a structured representation that preserves spatial relationships. This is significantly slower and more expensive, but for complex documents (technical manuals, financial reports, anything with tables or multi-column layouts), the difference in output quality is dramatic. It also produces precise bounding boxes per content region, which enables richer document representation and makes it possible to trace information back to its exact location in the source.

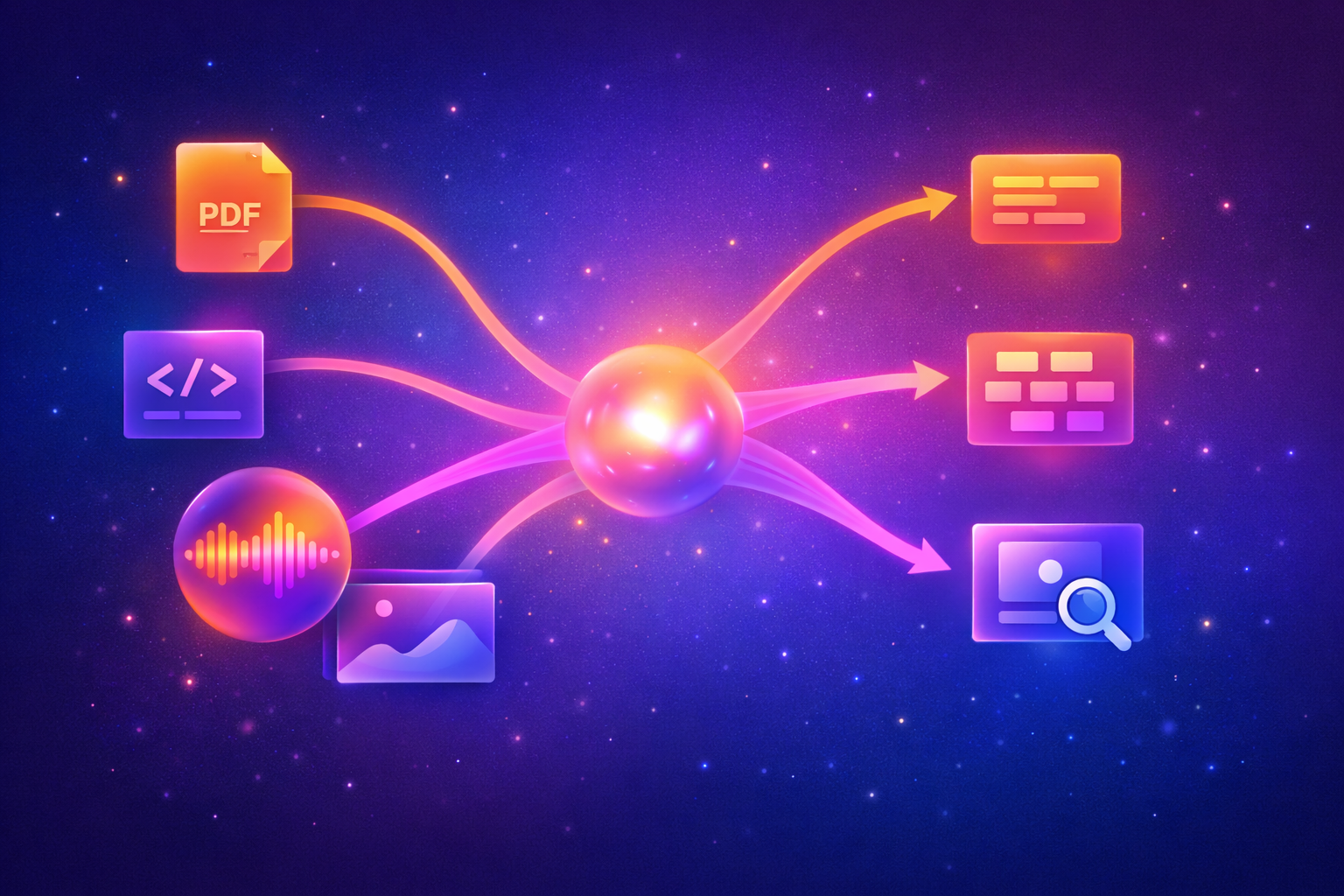
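The final reassembly stage of the layout-aware pipeline described above can be sketched roughly as follows. This is an illustration under stated assumptions, not how any specific tool works: the `Region` type, the fixed column count, and the coordinates are all hypothetical, and real pipelines also have to handle regions that span several columns in the middle of a page.

```python
from dataclasses import dataclass

@dataclass
class Region:
    kind: str    # e.g. "header", "text", "table", "figure"
    text: str    # text that OCR extracted inside the region
    page: int
    bbox: tuple  # (x0, y0, x1, y1), origin at the top-left of the page

def naive_order(regions):
    """Top-to-bottom only: interleaves the columns of a multi-column page."""
    return sorted(regions, key=lambda r: (r.page, r.bbox[1]))

def reading_order(regions, page_width, columns=2):
    """Order regions page by page, column by column, then top to bottom."""
    col_width = page_width / columns

    def key(r):
        x0, y0 = r.bbox[0], r.bbox[1]
        col = min(int(x0 // col_width), columns - 1)
        return (r.page, col, y0)

    return sorted(regions, key=key)

# Hypothetical detections for one two-column page, 600 units wide.
regions = [
    Region("text", "Left column, paragraph 1", 1, (50, 100, 290, 200)),
    Region("text", "Right column, paragraph 1", 1, (310, 100, 550, 200)),
    Region("text", "Left column, paragraph 2", 1, (50, 220, 290, 320)),
    Region("header", "Title", 1, (50, 40, 550, 80)),
]

ordered = [r.text for r in reading_order(regions, page_width=600)]
interleaved = [r.text for r in naive_order(regions)]
```

Here `ordered` reads the left column to the end before starting the right one, while `interleaved` jumps between columns. Because each region keeps its `page` and `bbox`, any chunk built from it can point back to the exact spot in the source document.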

Vision Language Models (VLM)

Vision Language Models represent a different paradigm entirely. Instead of a pipeline that extracts and reconstructs, VLMs process the document as a visual object, the way a human would, handling text, layout, and graphics in a single unified pass. They can interpret chart legends, annotated diagrams, and formatting-dependent content that would defeat any extraction-based approach. The trade-off is cost, latency, and accuracy. VLMs are computationally expensive, and at scale, that adds up fast. They are also prone to hallucinations and inaccurate output, because a generative model can produce a far wider range of outputs than an OCR model.

It's also worth noting that not all content is a PDF or a Word document. Code files, audio (MP3), and video (MP4) each require their own specialized parsing layer: AST-based parsers for code to preserve structure and syntax, speech-to-text transcription pipelines for audio, and frame extraction combined with transcription for video. These file types don't fit neatly into the text/layout/VLM spectrum, and treating them as plain text is its own category of parsing failure.

In practice, the choice isn't always one or the other. Many teams are converging on hybrid strategies: layout-aware parsing or VLMs for complex, high-value documents where accuracy is critical, and lighter approaches for simpler content where speed and cost matter more.
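As one illustration of the specialized parsing layers mentioned above, here is a minimal sketch of AST-based parsing for Python source using the standard library's `ast` module. The function name `chunk_python_source`, the chunk fields, and the sample source are assumptions made for this example. It only looks at top-level definitions and requires Python 3.8 or later for `end_lineno` and `ast.get_source_segment`.

```python
import ast

def chunk_python_source(source: str):
    """Split Python source into one chunk per top-level function or class."""
    tree = ast.parse(source)
    chunks = []
    for node in tree.body:
        if isinstance(node, (ast.FunctionDef, ast.AsyncFunctionDef, ast.ClassDef)):
            chunks.append({
                "name": node.name,
                "kind": type(node).__name__,
                "start_line": node.lineno,
                "end_line": node.end_lineno,
                "docstring": ast.get_docstring(node),
                # Exact source text of the definition (decorators are not included).
                "text": ast.get_source_segment(source, node),
            })
    return chunks

# Hypothetical input file, inlined so the example is self-contained.
SAMPLE = '''\
import math

def area(radius):
    """Return the area of a circle."""
    return math.pi * radius ** 2

class Shape:
    def name(self):
        return "shape"
'''

chunks = chunk_python_source(SAMPLE)
```

Each chunk is a complete function or class with its name, line span, and docstring attached, rather than an arbitrary window of characters that might cut a definition in half.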

Choosing the right strategy

Categorize your documents before choosing a parsing approach. The split is roughly: simple text-heavy documents go to traditional or specialized parsing, complex layout-dependent documents need layout-aware or VLM approaches, and most real-world document collections are mixed enough to warrant a hybrid strategy.
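The categorization step above could look something like the sketch below. Everything in it is hypothetical: the `DocProfile` fields, the thresholds, and the three parser labels are placeholders meant to show the shape of a routing rule, and the profile itself is assumed to come from a cheap inspection pass that is not shown here.

```python
from dataclasses import dataclass

@dataclass
class DocProfile:
    has_text_layer: bool     # text is encoded in the file, so no OCR is needed
    table_count: int
    column_count: int
    image_area_ratio: float  # share of page area covered by images, 0.0 to 1.0

def choose_parser(doc: DocProfile) -> str:
    """Return "text", "layout", or "vlm" using illustrative thresholds."""
    # Mostly visual documents: hand the whole page to a VLM.
    if doc.image_area_ratio > 0.5:
        return "vlm"
    # Scans, tables, or multiple columns: structure matters, use layout-aware parsing.
    if not doc.has_text_layer or doc.table_count > 0 or doc.column_count > 1:
        return "layout"
    # Clean single-column text: the cheap path is good enough.
    return "text"
```

For example, `choose_parser(DocProfile(True, 0, 1, 0.0))` returns `"text"`, while a scanned report with tables goes to `"layout"`. In a mixed collection, the thresholds are the part worth tuning against a sample of your own documents.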

At a small scale, you can afford to be liberal with expensive methods. With hundreds of thousands of documents, you need smarter routing. Don't build your proof-of-concept around VLMs for everything and assume the cost will be manageable in production; it usually isn't.
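A back-of-the-envelope calculation shows why the scale argument above matters. The per-page costs, the page count, and the document mix below are placeholder numbers chosen only to make the arithmetic easy to follow; they are not measurements or vendor prices, so substitute your own.

```python
# Placeholder relative costs per page, in arbitrary units (assumption, not real pricing).
COST_PER_PAGE = {"text": 1, "layout": 10, "vlm": 100}

def total_cost(pages: int, mix_percent: dict) -> int:
    """Total cost in units for `pages` pages split across parsers by percentage."""
    if sum(mix_percent.values()) != 100:
        raise ValueError("mix percentages must sum to 100")
    return sum(pages * pct * COST_PER_PAGE[parser]
               for parser, pct in mix_percent.items()) // 100

# Hypothetical corpus: 300,000 documents at 10 pages each.
pages = 300_000 * 10

all_vlm = total_cost(pages, {"vlm": 100})
routed = total_cost(pages, {"text": 70, "layout": 25, "vlm": 5})
```

With these placeholder numbers, sending everything to a VLM costs 300,000,000 units, while the routed mix costs 24,600,000 units, roughly twelve times less. The exact ratio depends entirely on your real costs and document mix, but the gap tends to be invisible in a small proof-of-concept and decisive in production.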

Know your error modes. Traditional parsing fails predictably on visual elements and complex layouts. VLMs fail differently, with more hallucination risk but better contextual understanding. Neither failure mode is acceptable if you're not accounting for it.

And build in flexibility from the start. The right parsing strategy today won't be the right one when your document volume doubles, or your use cases expand. Treat it as an ongoing decision, not a one-time setup.

This is exactly the problem UBIK is built around. Rather than forcing teams to become parsing experts or maintain multiple specialized pipelines, the platform handles document routing automatically, classifying each document on ingestion and sending it through the appropriate pipeline. Simple text documents go through fast, lightweight parsing. Complex layouts with tables and embedded visuals get the layout-aware treatment. Highly visual or unpredictable documents get routed to VLMs. And the platform even supports self-hosting your parser, depending on privacy requirements. You can explore how UBIK handles this end-to-end in our documentation.
